This is an excellent book. It is an attempt to distil the key messages from the vast array of studies that have been undertaken across the world into all the different factors that lead to educational achievement. As you would hope and expect, the book contains details of the statistical methodology underpinning a meta-analysis and the whole notion of ‘effect size’ that drives the thinking in the book. There is a discussion about what is measurable and how effect size can be interpreted in different ways. The key outcomes are interesting, suggesting a number of key factors that are likely to make the greatest impact in classrooms and more widely in the lives of learners.

My main interest here is to explore what Hattie says about homework. This stems from a difficulty I have when I hear or read, fairly often, that ‘research shows that homework makes no difference’. It is cited as a hard fact in articles such as this one by Tim Lott in the Guardian: Why do we torment kids with homework? Even though Tim is talking about his 6-year-old, and cites research that refers to ‘younger kids’, too often the sweeping generalisation is applied to all homework for all students. It bugs me and I think it is wrong.

I have written about my views on homework under the heading ‘Homework Matters: Great Teachers set Great Homework’. I’ve said that all my instincts as a teacher (and a parent) tell me that homework is a vital element in the learning process: reinforcing the interaction between teacher and student and between home and school, and paving the way to students being independent, autonomous learners. Am I biased? Yes. Is this based on hunches and personal experience? Of course. Is it backed up by research…? Well, that is the question.

So, what does Hattie say about homework?

Helpfully, he uses homework studies as an example of the overall process of meta-analysis, so there is plenty of material. In a key example, he describes a study of five meta-analyses that capture 161 separate studies involving over 100,000 students as having an effect size of d = 0.29. What does this mean? This is the best estimate of the typical effect size across all the studies, suggesting:

- improving the rate of learning by 15% – or advancing children’s learning by about a year

- 65% of effects were positive

- 35% of effects were negative

- the average achievement of students given homework exceeded that of 62% of the students not given homework.
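The 62% figure can be roughly reproduced from d alone. Here is a minimal sketch, assuming scores in both groups are normally distributed with the same spread (that is an assumption of this illustration, not something taken from the book). The function names are made up for the example.

```python
import math


def normal_cdf(z: float) -> float:
    """Cumulative distribution function of the standard normal."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def share_of_comparison_group_exceeded(d: float) -> float:
    """Fraction of the comparison group scoring below the average
    member of the treated group (often called Cohen's U3).

    Assumes normal scores with equal spread in both groups.
    """
    return normal_cdf(d)


d_homework = 0.29  # aggregated effect size quoted in the post
u3 = share_of_comparison_group_exceeded(d_homework)
print(f"d = {d_homework}: average homework student exceeds about {u3:.0%} "
      "of the no-homework group")
```

With d = 0.29 this comes out at a little over 61%, which is in line with the figure Hattie quotes.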

However, there are other approaches such as the ‘common language effect’ (CLE) that compares effects from different distributions. For homework, a d = 0.29 effect translates into a 21% chance that homework will make a positive difference. Or, from two classes, 21 times out of 100, using homework will be more effective. Hattie then says that terms such as ‘small, medium and large’ need to be used with caution in respect of effect size. He is ambitious and won’t accept comparison with 0.0 as a sign of a good strategy. He cites Cohen as suggesting with reason that 0.2 is small, 0.4 is medium and 0.6 is large, and later argues himself that we need a hinge-point: d > 0.4 is needed for an effect to be above average and d > 0.6 for it to be considered excellent.
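For comparison, the common language effect size as it is usually defined (McGraw and Wong's version) is the probability that a randomly chosen student from the homework group outscores a randomly chosen student from the no-homework group. Here is a minimal sketch, assuming normal scores with equal spread in both groups. This is the textbook formula, not a reconstruction of how the 21% above was arrived at, and the function names are made up for the example.

```python
import math


def normal_cdf(z: float) -> float:
    """Cumulative distribution function of the standard normal."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def common_language_effect(d: float) -> float:
    """Probability that a random member of the treated group scores
    higher than a random member of the comparison group.

    Assumes normal scores with equal spread in both groups, so the
    difference between two random scores has standard deviation sqrt(2).
    """
    return normal_cdf(d / math.sqrt(2.0))


for label, d in [("no effect", 0.0), ("homework overall", 0.29)]:
    print(f"{label}: d = {d}, CLE = {common_language_effect(d):.1%}")
```

Under those assumptions d = 0.29 gives roughly 58%, and d = 0 gives exactly 50%, which is what you would expect from a coin toss between two identical classes.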

OK. So what is this all saying? Homework, taken as an aggregated whole, shows an effect size of d = 0.29, which is between small and medium. Oh… but wait… here comes an important detail. Turn the page: the studies show that the effect size at primary age is d = 0.15 and for secondary students it is d = 0.64! Well, now we are starting to make some sense. On this basis, homework for secondary students has an ‘excellent’ effect. I am left thinking that, with a difference so marked, surely it is pure nonsense to aggregate these measures in the first place?

Hattie goes on to report that other factors make a difference to the results: e.g. when what is measured is very precise (e.g. improving addition or phonics), a bigger effect is seen compared to when the outcome is more ephemeral. So, we need to be clear: what is measured has an impact on the scale of the effect. This means that we have to throw in all kinds of caveats about the validity of the process. There will be some forms of homework more likely to show an effect than others; it is not really sensible to lump all work that might be done in between lessons into the catch-all ‘homework’ and then to talk about an absolute measure of impact. Hattie is at pains to point out that there will be great variations across the different studies that simply average out to the effect size on his barometers. Again, in truth, each study really needs to be looked at in detail. What kind of homework? What measure of attainment? What type of students? And so on… With so many variables, is aggregating them together more or less meaningless? Well, I’d say so.

Nevertheless, d = 0.64! That matches my predisposed bias so I should be happy. QED. Case closed. I’m right and all the nay-sayers are wrong. Maybe, but the detail, as always, is worth looking at. Hattie suggests that the reason for the difference between the d = 0.15 at primary level and the d = 0.64 at secondary is that younger students can’t undertake unsupported study as well, they can’t filter out irrelevant information or avoid environmental distractions – and if they struggle, the overall effect can be negative.

At secondary level he suggests there is no evidence that prescribing homework develops time management skills and that the highest effects in secondary are associated with rote learning, practice or rehearsal of subject matter; more task-orientated homework has higher effects than deep learning and problem solving. Overall, the more complex, open-ended and unstructured tasks are, the lower the effect sizes. Short, frequent homework closely monitored by teachers has more impact than its converse forms, and effects are higher for higher ability students than lower ability students, and higher for older rather than younger students. Finally, the evidence is that teacher involvement in homework is key to its success.

So, what Hattie actually says about homework is complex. There is no meaningful sense in which it could be stated that “the research says X about homework” in a simple soundbite. There are some lessons to learn:

The more specific and precise the task is, the more likely it is to make an impact for all learners. Homework that is more open, more complex is more appropriate for able and older students.

Teacher monitoring and involvement is key – so putting students in a position where their learning is too complex, extended or unstructured to be done unsupervised is not healthy. This is more likely for young children, hence the very low effect size for primary age students.

All of this makes sense to me and none of it challenges my predisposition to be a massive advocate for homework. The key is to think about the micro-level issues, not to lose all of that in a ridiculous averaging process. Even at primary level, students are not all the same. Older, more able students in Year 5/6 may well benefit from homework where kids in Year 2 may not. Let’s not lose the trees for the wood! Also, what Hattie shows is that educational inputs, processes and outcomes are all highly subjective human interactions. Expecting these things to be reduced sensibly into scientifically absolute measured truths is absurd. Ultimately, education is about values and attitudes and we need to see all research in that context.

PS. If you are reading this from Sweden, thanks for reading. Let me know your thoughts on this issue.

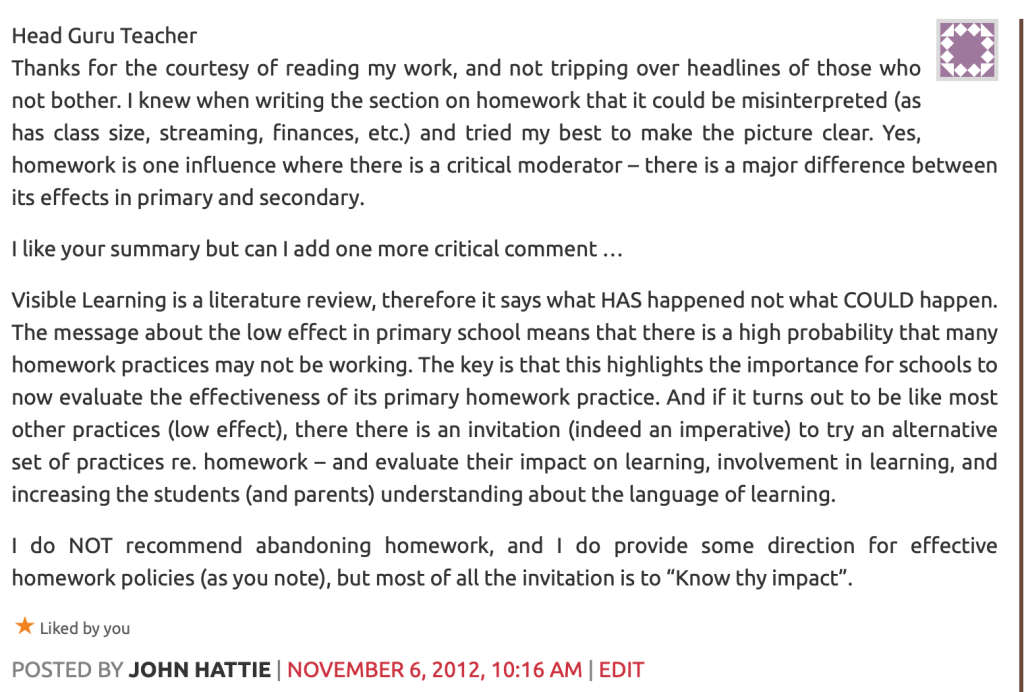

Update: Note that Hattie himself has commented on this blog post: https://teacherhead.com/2012/10/21/homework-what-does-the-hattie-research-actually-say/comment-page-1/#comment-536

(Slides from a Teach First session on homework are here: Teach First Homework)

See also Setting Great Homework: The Mode A:Mode B approach.

[…] See Tom Sherrington’s (@HeadGuruTeacher) discussion of some of the research on homework in his blog-post here. […]

“The biggest mistake Hattie makes is with the CLE statistic that he uses throughout the book. In ‘Visible Learning’, Hattie only uses two statistics, the ‘Effect Size’ and the CLE (neither of which Mathematicians use).

The CLE is meant to be a probability, yet Hattie has it at values between -49% and 219%. Now a probability can’t be negative or more than 100% as any Year 7 will tell you.”

https://ollieorange2.wordpress.com/2014/08/25/people-who-think-probabilities-can-be-negative-shouldnt-write-books-on-statistics/

[…] at the evidence on homework from Hattie, we’re committed to setting homework but need to be mindful that only certain types of […]

[…] Hattie has thrown some doubt over the effectiveness of homework as an intervention, wouldn’t it be better to, as Tom […]

Nice post… Though, here homework is to target students who are a bit older. Pupils at elementary level or less than 4 years old may not be taken seriously on the issue of assignments.

[…] mentions a couple of bits of research in his post regarding the effectiveness of homework on learning and although some studies suggest a […]

[…] of analogies with outdoor pursuits tasks like learning to abseil. If you look at the detail – for example the homework chapter as I did here – you also learn about the complexities of education research itself and the importance of […]

[…] Homework: What does the Hattie research actually say? […]

[…] as an example of the pitfalls of interpreting the results. I’ve written about this in full in this post. It was interesting to see, in a ranking of the Visible Learning effect sizes, that Drugs appears […]

I’m reading this from Sweden (although I am originally from the UK and trained to teach there). Seven years ago I did a small piece of action research as part of my master’s degree looking at how I used homework. I was in the UK at the time. My findings, although much smaller scale, would support Hattie. Homework has an impact but you must design it properly was my basic conclusion. My problem is having to deal with the huge number of parent conversations and the societal attitude towards homework in Sweden (which is generally negative), and towards school in general really, fuelled by the media and its anti-homework stance. My own feeling about the attitude is that academic learning should happen only in school. Even getting parents to do something so simple as read with their child can cause endless arguments. No, it’s not all parents and it does depend a lot on your location. But that’s just my experience of the schools I have worked in.

Hi LUNATIKSCIENCE,

I am currently in the process of looking into a whole school home learning policy and I would be really interested to read the work you did. I have been trying to read as much research into home learning as possible, but getting some actual data would be great.

Would you be able to share any additional information in regards to your findings?

Many thanks

Alasdair

Hi, sorry just noticed this comment. I can send you my paper that I wrote if you are still interested.

Yes please. That would be so useful.

alightbody@jess.sch.ae

Thanks very much.

Alasdair

Hi,

I would also be really interested if you were happy to share your research. I am a DH working in a Prep school that is in the midst of analysing our approach to homework.

Thanks

[…] I posted a link to a pro-homework argument. Again today, I’ve stumbled across another–this one summarizing John Hattie’s Visible Learning on the […]

Reblogged this on The Maths Mann.

[…] When preparing for our leadership planning day yesterday, I was investigating how to build on-going professional teaching conversations (as an alternative to Performance Review) when I stumbled upon John Hattie again talking up collective teacher efficacy on the Principal Centre Radio podcast. If you are not familiar with Hattie, his name is rarely far from discussions about teacher effectiveness… Visible Learning, 1400 meta-analyses, 80,000 studies, 300 million students… what works best in education (still, his chosen research approach, meta-analysis, is not without its detractors or straightforward teacher criticism.) […]

[…] Feel free to leave comments/thoughts between meetings here e.g. Sam sent me this link: Homework: What does the Hattie research actually say? […]

But you make the assumption that educational achievement is, per se, the only thing affected. Easy to see from the teacher/school perspective. But from the parent/home perspective, there may be many more valuable activities going on that are much more important than homework to the growth of the human being. So these things need to be taken into account too. Where homework detracts from the time spent on these, then it could be good from a school education point of view but bad from a more all-round education point of view.

[…] Homework: What does the research say about its effectiveness? […]

[…] of the problem is that the research on homework, although plentiful, is unclear. In his post Homework: What does the Hattie research actually say? blogger, author of The Learning Rainforest and education consultant Tom Sherrington unpicks the […]

[…] I have explored issues with homework in various different posts. In particular, the research into homework by John Hattie is covered in detail in this post: Homework: What does the Hattie research actually say? […]

This is an excellent summary of Hattie’s work and gives us all food for thought about what could be meaningful and helpful and what to avoid when considering homework.

[…] ‘Homework: What does the Hattie research actually say?’ by Tom Sherrington. It’s important to keep in mind that all the research around homework applies to remote learning: Specific and precise tasks are more successful than longer tasks that involve complex problem solving, higher ability students benefit more than lower ability students, older students benefit more than younger students, and teacher monitoring is crucial. […]

[…] is more crucial for novices and less effective as students gain expertise, and homework has little impact on educational outcomes, particularly for young […]

[…] a lack of evidence is not the same as evidence that an approach is not successful. This echoes the comments Hattie made here about Visible Learning: “Visible Learning is a literature review, therefore it says what HAS happened not what COULD […]
